AI Decoded #3 of 8

This Isn't a Tech Decision: It's a Hiring Decision

LLMs, foundation models, open source, proprietary: these are the engines your strategy runs on. Here's how to choose the right one without a technical background.

Sundar Rajan

Feb 18, 2026

6 min read

Part 3 of 8 — AI Decoded for Founders | Layer 2: The Models

Your team is ready to build. The first question they bring you: which AI model do we use?

You ask for a shortlist. They send seven options: GPT-4, Claude 3.5, Gemini 1.5 Pro, Llama 3, Mistral, and two others you've never heard of.

"Which one?" you ask.

"It depends," they say.

This part explains what it depends on — in plain language.

Think of It as a Hiring Decision

Not a technical one.

The model your firm chooses is the AI it will actually work with. And like any hire, different options come with different strengths, different costs, and different levels of control. Some are the equivalent of hiring from a top agency — skilled, immediately available, you pay per engagement, and they keep their methods proprietary. Others are like bringing someone fully in-house — more to manage, but total control over what they do and what they see.

Once you see it that way, the decision gets much simpler.

Here's who's in the room.

Large Language Model (LLM)

The most important AI type in business today.

When someone says "AI" in a business context right now, they almost always mean an LLM. It's an AI system trained on enormous amounts of text (research, articles, books, code) that learned to read and write fluently, often well enough to pass for a skilled human writer. ChatGPT, Claude, and Gemini are all built on LLMs.

When your firm uses AI to synthesise market research, draft a client brief, or structure a strategy memo — you're working with an LLM. It reads your request, understands what you need, and writes something new in response. Every time.

Think of it as hiring someone who has read essentially everything ever written. They can work on any topic, in any style, instantly. That's what you're accessing when you connect to an LLM.

Foundation Model

The starting point for every serious AI system.

A foundation model is a large, general-purpose model trained on vast amounts of broad data and capable of being adapted for many different uses. LLMs are a type of foundation model. You don't build one from scratch; that takes years and hundreds of millions of dollars. You start with one and adapt it to your firm's specific needs.

Your AI research tool doesn't begin from zero. It begins from a foundation model that already understands language, reasoning, and context — and you build your firm's knowledge and working standards on top of that base.

Think of a strong new hire from a good programme. Deeply educated, quick to learn, ready to contribute — but they don't know your firm yet. You onboard them, give them context, and they adapt. That onboarding process, in AI terms, is exactly what Part 4 covers.

Generative AI (GenAI)

The shift that changed everything.

Generative AI creates new content — text, images, audio, code — rather than just retrieving or organising what already exists. This is the category that went mainstream with ChatGPT in 2022. Most AI tools your firm will invest in are generative AI.

The critical difference from older automation: old systems retrieved fixed answers. Generative AI writes a fresh response every time, calibrated to exactly what was asked. Your AI assistant doesn't pull a template and fill in the blanks. It reads your specific brief and writes something new — just for that client, just for that question.

Think of the difference between a filing clerk and a writer. The filing clerk retrieves what's already there. The writer creates something original every time. Generative AI is the writer — and that's why it feels so different from any tool that came before.

Multimodal AI

AI that works across text, images, and more — all at once.

Earlier AI was single-modal. A language model handled text. A vision model handled images. They didn't work together. Multimodal AI handles all of it in one system, simultaneously.

A client sends a 40-slide presentation. Some slides are text. Some are charts. Some are images of competitor products. A multimodal AI reads all of it — the written content and the visual content — and responds with full context from everything it saw. Without multimodal capability, your AI has to ignore the charts and images entirely.

Think of the difference between an analyst who only reads written documents and one who walks into the room, reads the brief, looks at the whiteboard, and interprets the data on the screen — all at the same time. The second analyst sees the full picture. Multimodal AI gives your AI the same range.

Small Language Model (SLM)

Faster, cheaper — built for specific, high-volume tasks.

Not every task needs the most powerful model. Small language models are more compact, significantly faster, and much cheaper to run. They're designed for specific, high-volume, narrow tasks where you don't need the full depth of a frontier model.

Your firm handles 150 emails a day. Many are routine — scheduling requests, document confirmations, status questions. You don't need the most expensive AI for those. A small model categorises and routes them in milliseconds at a fraction of the cost, while your larger model handles complex client situations that actually require depth.

Think of a sharp junior specialist trained for one specific function — handling routine work fast and well, escalating only what needs senior attention. You don't pay a senior rate for a task that a junior handles in ten seconds.
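
For readers who want to see the routing idea in concrete form, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the keyword list, the `route_email` and `daily_cost` functions, and the per-email costs are hypothetical, and a real system would likely use a small model rather than keyword matching to sort messages.

```python
# Hypothetical sketch: send routine emails to a small model, the rest to a large one.
# The keywords and per-email costs are made-up illustration values.

ROUTINE_KEYWORDS = ("schedule", "confirm", "status")

# Assumed cost per email, in dollars (not real vendor pricing).
COST_PER_EMAIL = {"small": 0.0005, "large": 0.02}


def route_email(subject: str) -> str:
    """Return 'small' for routine-looking emails, 'large' otherwise."""
    text = subject.lower()
    if any(keyword in text for keyword in ROUTINE_KEYWORDS):
        return "small"
    return "large"


def daily_cost(subjects: list[str]) -> float:
    """Total assumed cost of handling each email with its routed model."""
    return sum(COST_PER_EMAIL[route_email(s)] for s in subjects)


if __name__ == "__main__":
    inbox = [
        "Can we reschedule Thursday's call?",
        "Please confirm receipt of the signed NDA",
        "Concerns about the pricing strategy in the draft memo",
    ]
    for subject in inbox:
        print(f"{route_email(subject):5}  {subject}")
    print(f"Routed cost:    ${daily_cost(inbox):.4f}")
    print(f"All-large cost: ${COST_PER_EMAIL['large'] * len(inbox):.4f}")
```

The design point is the split itself: the cheap path absorbs the volume, and only the messages that need depth reach the expensive model.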

Open Source Model

Full control — and full responsibility.

Open source models (like Meta's Llama or Mistral) make their trained parameters, and usually the code needed to run them, publicly available, though licence terms vary from model to model. Anyone can download them, run them on their own infrastructure, and adapt them. The key benefit: when you host the model yourself, your data stays on your servers. Nothing passes through a third-party system.

For a firm handling sensitive client information — strategic plans, M&A research, confidential analysis — this matters. With an open source model running on your own infrastructure, client data never leaves your environment. The tradeoff is that you now own the infrastructure, the maintenance, and all the operational overhead that comes with it.

Think of hiring a contractor who hands you their complete methodology and then joins your team in-house. You own everything. You control everything. Maximum control, maximum responsibility.

Proprietary Model

Immediate capability — minimal setup.

A proprietary model (like OpenAI's GPT-4 or Anthropic's Claude) is accessed through an API. Your system sends a request, their servers respond. You pay per use. They handle all the infrastructure, updates, and maintenance. You focus entirely on what you're building.

Most firms start here — and for good reason. Connect to an API and within days your AI tool is running. No servers to manage, no model to maintain, no infrastructure team required. As you scale, you can evaluate whether data sensitivity or cost savings make open source worth the added complexity. But that's a later decision. Starting with a proprietary API gets you to a working system fast.

Think of a top agency hire. Skilled, immediately available, you pay per engagement. The agency handles their training and keeps them current. You don't own the relationship — but you get serious capability without the overhead.
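
"Pay per use" is easier to reason about with numbers. The sketch below assumes billing by tokens (small chunks of text), which is a common arrangement for model APIs but is not spelled out in this article. The `Pricing` class, both functions, the prices, and the 22 working days are hypothetical values chosen only to show the arithmetic.

```python
# Hypothetical sketch of pay-per-use pricing.
# The prices below are assumed values, not any vendor's real rates.

from dataclasses import dataclass


@dataclass
class Pricing:
    input_per_million: float   # dollars per 1M tokens sent to the model
    output_per_million: float  # dollars per 1M tokens the model writes back


def request_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost in dollars of one request under the assumed pricing."""
    return (
        input_tokens / 1_000_000 * pricing.input_per_million
        + output_tokens / 1_000_000 * pricing.output_per_million
    )


def monthly_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                 pricing: Pricing, working_days: int = 22) -> float:
    """Rough monthly spend if every request is about the same size."""
    per_request = request_cost(input_tokens, output_tokens, pricing)
    return requests_per_day * working_days * per_request


if __name__ == "__main__":
    assumed = Pricing(input_per_million=3.0, output_per_million=15.0)
    per_request = request_cost(input_tokens=2_000, output_tokens=500, pricing=assumed)
    print(f"One request: ${per_request:.4f}")
    print(f"200 requests a day for a month: ${monthly_cost(200, 2_000, 500, assumed):.2f}")
```

Swapping in a provider's published rates and your own usage estimates turns this into a quick budget check before you commit.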

AGI — Artificial General Intelligence

The one you don't need to think about yet.

AGI is a hypothetical future AI that can reason, learn, and solve problems across any domain as well as, or better than, a human. Not specialised. Not just language or images. Every intellectual task, applied flexibly, the way a person would.

We don't have it today. It's a long-term research goal, and it is unlikely to affect your AI decisions over the next three to five years. Companies claiming it's imminent are, in most cases, fundraising. Knowing that AGI is aspirational (not available, not something you can buy or access) helps you filter the noise and stay focused on what actually exists.

Think of the mythical perfect hire: someone who can do every job at expert level, learn anything overnight, and never need onboarding. Every founder has imagined this person. They don't exist. Neither does AGI — yet.

The Model Decision at a Glance

| What your firm needs | What to consider |
|---|---|
| Get started fast, no infrastructure overhead | Proprietary API (OpenAI, Anthropic, Google Gemini) |
| Client data must stay on your servers | Open source model (Meta Llama, Mistral) |
| Work with text, images, and decks together | Multimodal model (GPT-4o, Claude 3, Gemini) |
| High-volume, routine, cost-sensitive tasks | Small language model (SLM) |
| Domain expertise specific to your firm | Foundation model + techniques (fine-tuning, RAG, tools) |
| AI that does everything, perfectly, forever | AGI (does not exist yet) |
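
If it helps to see the table as logic rather than rows, the sketch below restates it as a simple lookup in Python. The requirement labels (`fast_start`, `data_on_premises`, and so on) and the `recommend` function are hypothetical names invented for this example; the suggestions themselves only repeat the table.

```python
# Hypothetical sketch: the decision table as a lookup.
# The requirement keys are invented labels; the values restate the table rows.

RECOMMENDATIONS = {
    "fast_start": "Proprietary API",
    "data_on_premises": "Open source model",
    "mixed_media": "Multimodal model",
    "high_volume_routine": "Small language model (SLM)",
    "firm_specific_expertise": "Foundation model + techniques (fine-tuning, RAG, tools)",
}


def recommend(needs: list[str]) -> list[str]:
    """Return the table's suggestion for each recognised need, in order, without duplicates."""
    suggestions: list[str] = []
    for need in needs:
        suggestion = RECOMMENDATIONS.get(need)
        if suggestion and suggestion not in suggestions:
            suggestions.append(suggestion)
    return suggestions


if __name__ == "__main__":
    print(recommend(["fast_start", "mixed_media"]))
```

A firm usually has more than one need at once, which is why the function returns a list: the answer is often a combination, not a single model.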

What this layer means for you as a strategic leader: Don't get caught in the model arms race — a new "best model" launches every few months. The model you choose matters far less than what you build on top of it. Most firms should start with a proprietary API, get something working, and then optimise from there. The real strategic work begins in the next layer — where you make a general AI work specifically for your firm.

What's Next

You now have the model. It's powerful. It's capable.

And it knows nothing about your firm.

It doesn't know your methodology. It doesn't know your clients. It doesn't know the standards your work is held to or the voice your reports are written in. Out of the box, it's a generalist — brilliant at everything in general, specific to nothing in particular.

Part 4 covers the techniques that close that gap. This is where a general AI becomes your firm's AI — and where most of the practical work of building something genuinely valuable actually happens.

We Build AI Employees to Work Alongside Your Team

Want to Have a Strategic Discussion?

Book A Discovery CallDon't miss out on an additional 5x to 10x revenue growth and stay ahead of competitors

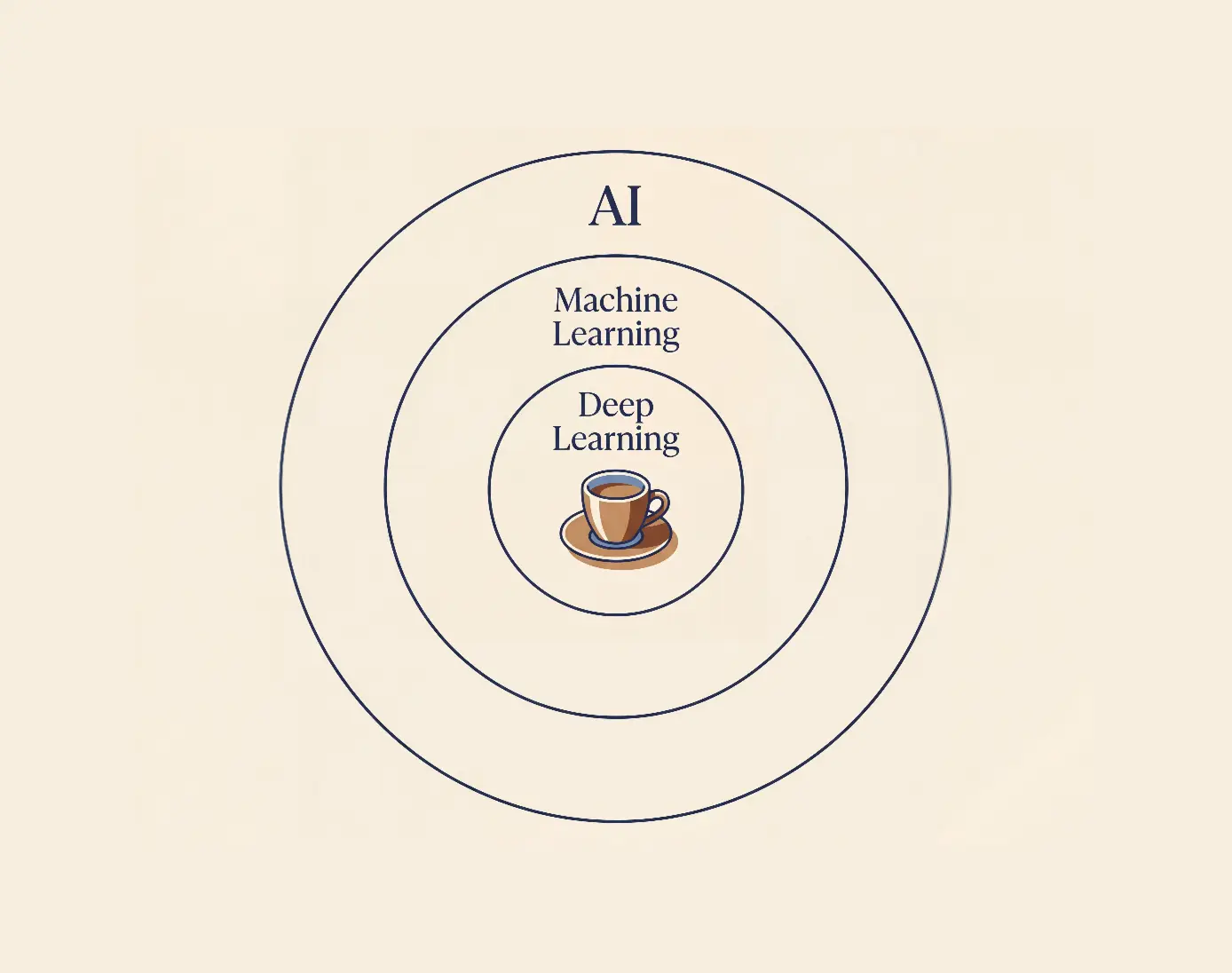

Youre Mixing Up Electricity and Coffee Machines — Heres the Hierarchy

AI, ML, Deep Learning, Neural Networks — everyone uses them interchangeably. Theyre not the same. Heres the hierarchy that makes the rest of AI finally make sense.

Feb 18

•6 min read

Previous Article

Your $200K AI Knows Everything — Except Your Business

The difference between a generic AI and one that works like a specialist inside your firm is the techniques you apply. RAG, fine-tuning, guardrails — explained without the jargon.

Feb 18

•8 min read

Next Article